We've been shipping features to make it easier to evaluate Agent Experience (AX) and act on what we find — so your tool is truly agent-ready.

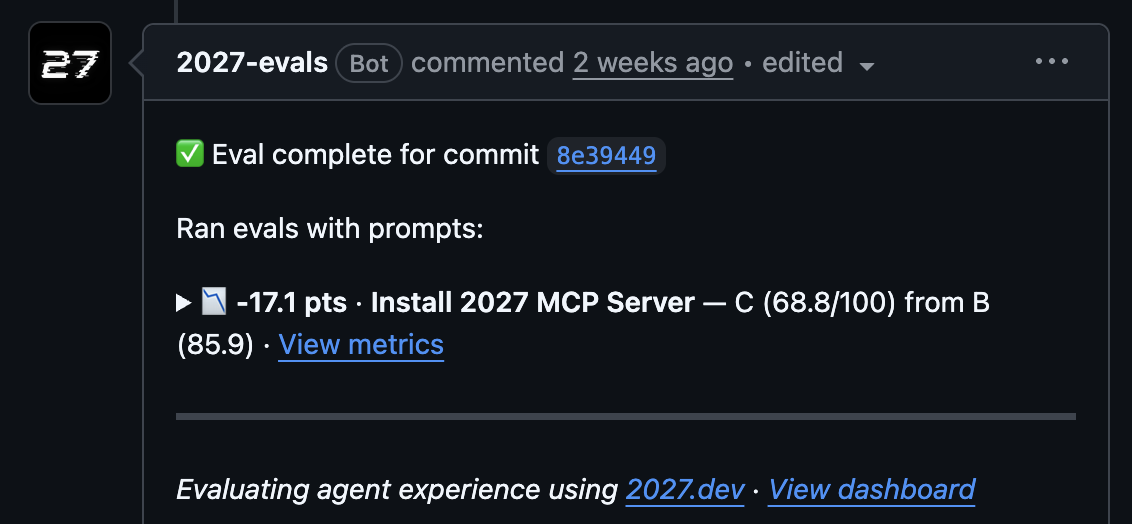

2027 × GitHub Connect

Our GitHub app runs evals on every docs PR and posts results as a comment, so your team can see when Agent Experience regresses and fix issues before they go live. Install the app in Settings, then use Prompt → Configure to tie a prompt to a docs repo.

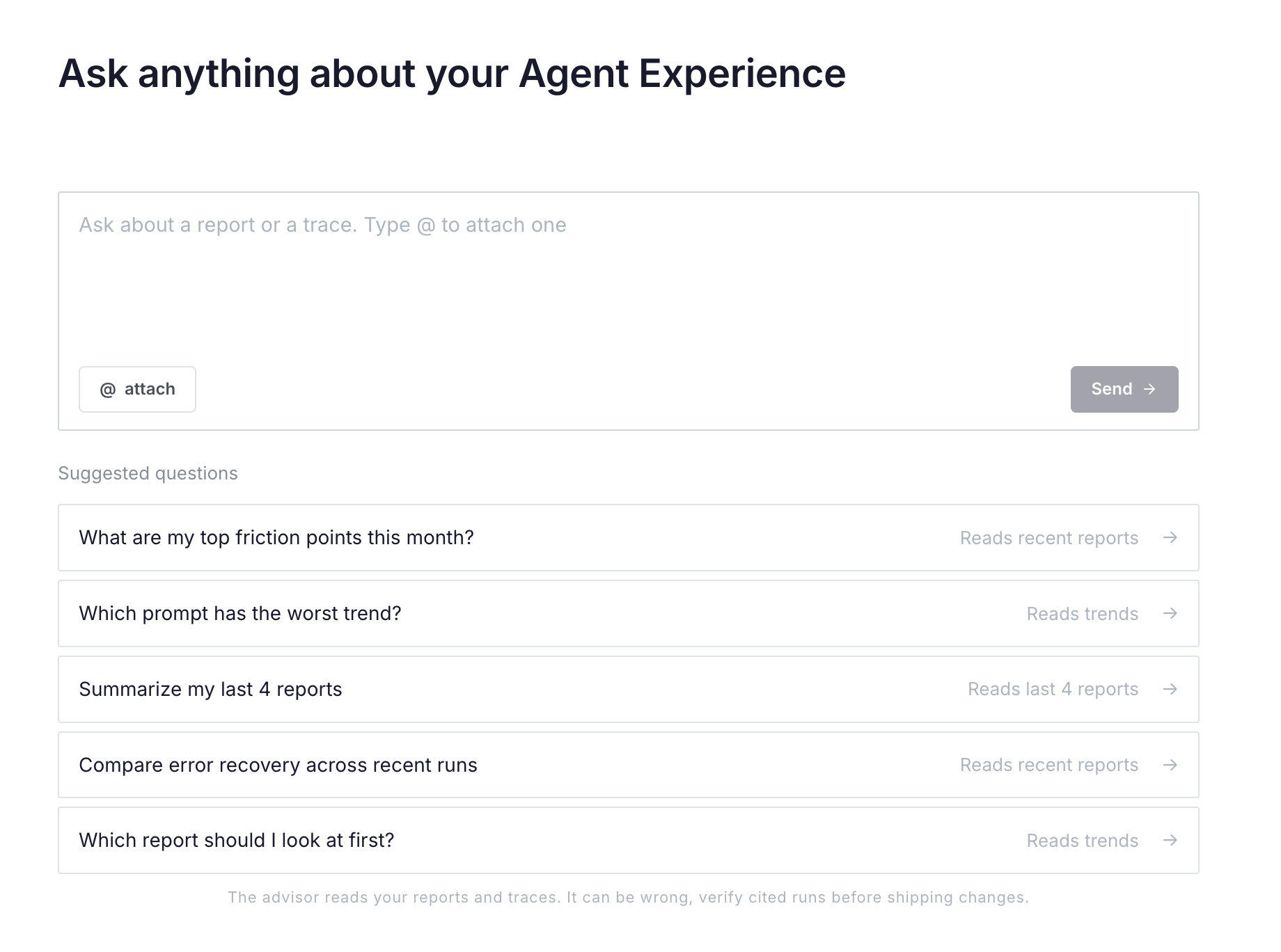

Ask 2027

Chat directly with your eval data. Ask things like "Where did agents get stuck last week?" or "What's the biggest issue to fix?" and get answers across all your runs — or a specific subset — without opening each report individually.

MCP integration

Many of you prefer staying in the terminal, so we built for that too. With 2027 MCP, you can request evals, create new prompts, and spot friction trends across runs without leaving your terminal or editor.

Competitive intel

Know exactly how you stack up. Add competitors to any prompt and we'll benchmark them on the same scenario — see where you lead, where you trail, and how each tool is trending over time. Use Prompt → Configure to add competitors.

Coming soon

- Revised AX methodology. The space is evolving fast and our measurement approach is evolving with it. More soon.

- One-click prompts for each recommendation. Fixing friction should be easy. Based on the friction we catch during runs, we'll generate a ready-made prompt you can paste directly into your agent.

- Impact projections. Each recommendation will be paired with an estimated improvement, like "~30s faster" or "50% fewer errors", so it's easier to prioritize fixes.

Thoughts?

We're always here if you have any thoughts on what we can do better. Reach out at mika@2027.dev or DM me on X anytime — @heymikasagi.