Welcome to our very first product update! We launched 2027.dev to help developer tools adapt to their new power users: AI agents. The Agent Arena now covers eight categories, with more coming soon. Meanwhile, we've been expanding the Agent Experience (AX) platform. You can now dive deeper into reports with agent traces, create new prompts for custom evals, and configure your eval settings.

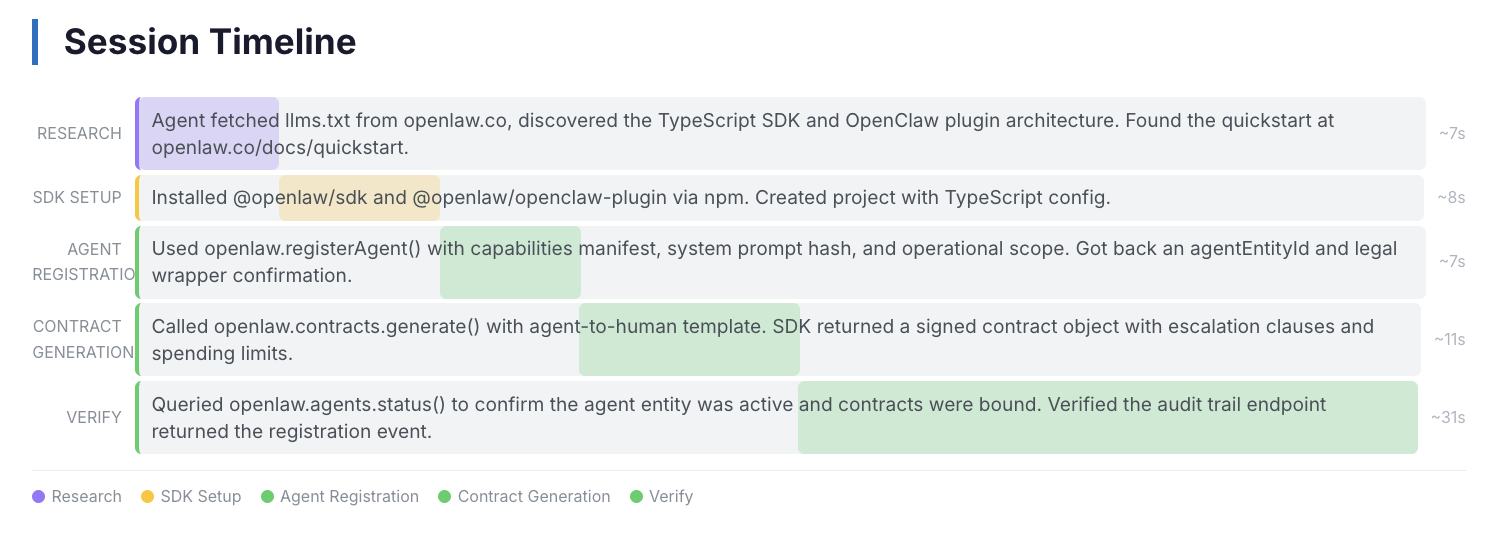

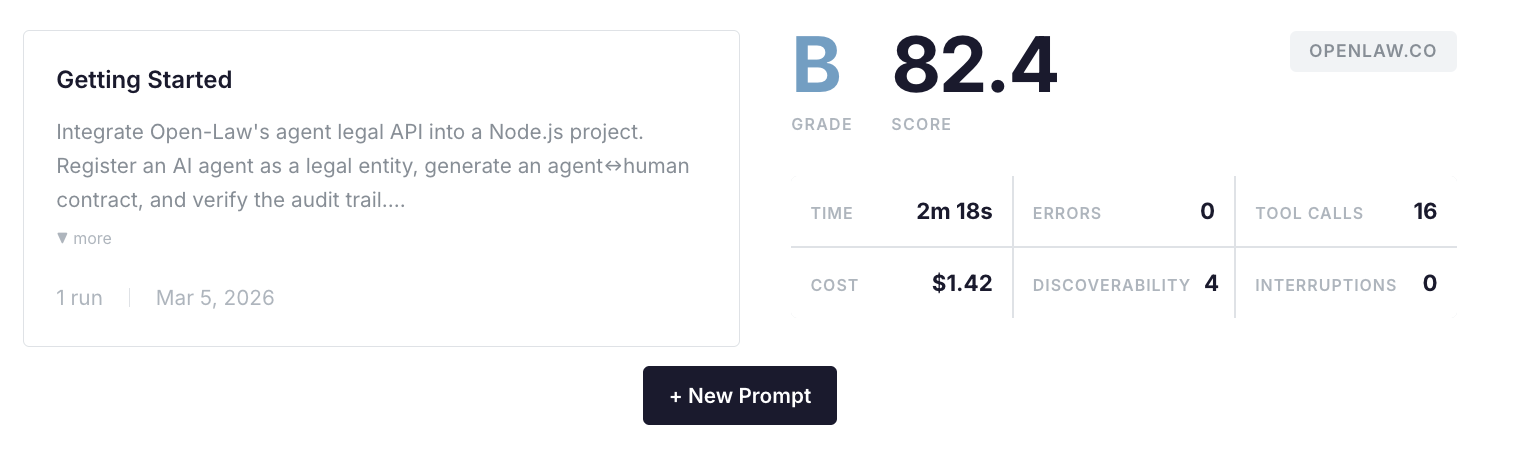

Eval reports and agent traces

Every eval comes with a detailed report: metrics, session analysis, key findings, and a full agent trace, so you can see exactly what the agent did, step by step. To open your Getting Started eval, go to Past Reports → select a report. Click Agent Trace to see the full session.

Create new prompts

Our default eval is the getting started flow: an AI agent sets up your tool fully autonomously. But that's just one part of AX. You can now create custom prompts to evaluate other flows, or to steer the agent during getting started (for example, by sharing API keys or asking it to use a CLI). Just hit New Prompt on your dashboard.

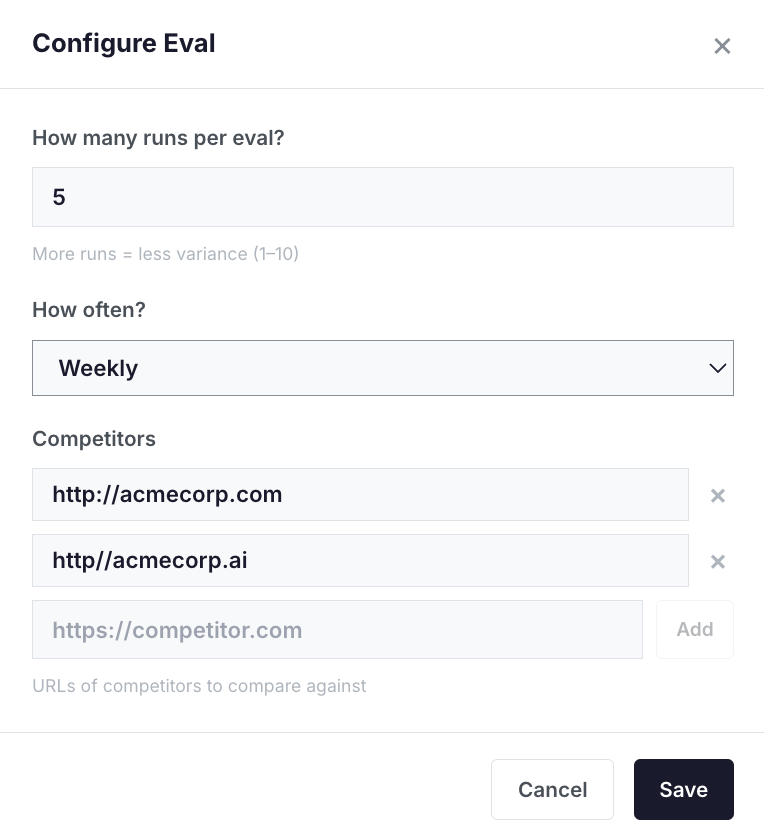

Configure your eval

Many of you raised the issue of variance between runs, and we heard you. For each eval, you can now configure:

- Number of runs per eval (in our experience, 5 runs give a reliable median)

- Eval frequency

- Competitors to benchmark against

Together, these settings determine how many runs each eval includes, how often results arrive, and whether they include competitors in your category.

Make something agents want

We recently published our first article, sharing what we learned from evaluating 60+ devtools. Measuring AX is a new field, and publishing our learnings is our way of giving back to a community that has been so open with its own insights.

What's next

- Instant eval results. We recently moved to automated evals, but we still take an extra step to verify results before sharing them. Our goal is to give you immediate, ongoing evals.

- Evaluating AX on pages before they go live. Folks have asked for a way to evaluate and improve AX on pages before publishing them.

- Push PRs to GitHub. Improve docs or sites directly from 2027.dev: we'll open a PR for you based on eval findings.

More feedback?

The field is new, and our approach reflects our best estimate of how agents use tools today. We're open to evolving it. If you have thoughts on how we should do things, or features we should introduce, we're all ears! Reach out at mika@2027.dev or DM me on X (@heymikasagi) anytime.